How to load JSON data in PostgreSQL with the COPY command

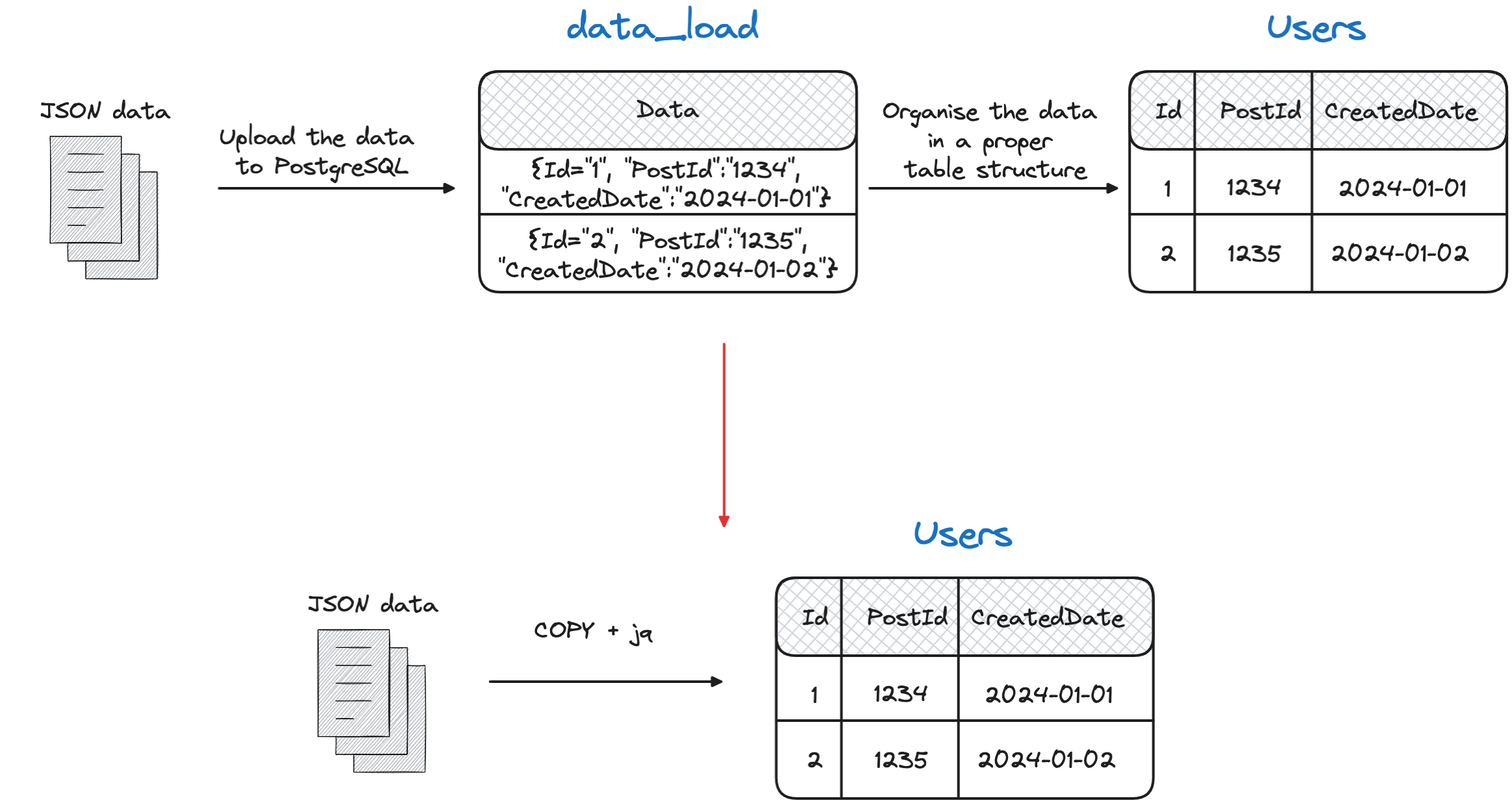

- 4 minutes read - 748 wordsYou have a JSON dataset that you want to upload to a PostgreSQL table containing properly formatted rows and columns… How do you do it?

All the main sources like my own blog and others tell you to load the JSON in a dedicated temporary table containing a unique JSON column, then parse it and load into the target table. However there could be another way, avoiding the temp table!

In this blog we’ll see how to upload the JSON directly using PostgreSQL COPY command and using an utility called jq!

PostgreSQL COPY command

First of all, let’s check PostgreSQL COPY command. It’s a command that allows you to copy into a PostgreSQL table data from a file, there are two versions:

COPYif the file is sitting in the PG server already\COPYif the file is sitting in the client machine connected to the server viapsql

In both cases, the standard COPY command has the following minimal set of parameters:

\copy <TARGET TABLE>(<OPTIONAL LIST OF COLUMNS>) FROM <SOURCE FILE> <FORMAT>

Where:

<TARGET TABLE>is the name of the target table(<OPTIONAL LIST OF COLUMNS>)defines the columns in the table to load<SOURCE FILE>is pointing to the source file to load<FORMAT>defines the format of the data

The full list of parameters is available in the PostgreSQL COPY documentation

PostgreSQL COPY command - the out of the box formats

Let’s focus on the formats available in the PostgreSQL COPY documentation are listed:

TEXT: can be used to load a full textCSV: to load comma (or otherwise separated) valuesBINARY: to load binary files

Unfortunately there doesn’t seem to be an out of the box way to load JSON files!

The PROGRAM option to the rescue!

A, maybe not known, option of the COPY command is to point to a program to execute instead of the file. This option can be called with the following command:

\copy <TARGET TABLE>(<OPTIONAL LIST OF COLUMNS>) FROM PROGRAM "<SET OF INSTRUCTIONS>"

Compared to the previous \copy call, this time we are adding the PROGRAM with a set of instructions delimited by quotes or double quotes that will be executed on the client machine before loading the data.

If you are using the

COPYcommand on the server, you’ll probably need a superuser. This is the error message shown in Aiven:ERROR: must be superuser or have privileges of the pg_read_server_files role to COPY from a file

So, what we can do, is to reshape the data before loading it.

jq - the indispensable JSON parsing tool

I’ve been using jq quite a while in a lot of blog posts, it’s a very handy tool to parse, reshape, select JSON documents. For the purpose of this blog, we’ll use to reshape the JSON input into a CSV format, digestible from the PostgreSQL COPY command.

You need to have jq installed on the workstation from where the

COPYcommand is executed!

Let’s create a basic JSON file with named test.json with the following content:

{

"id":1,

"mystring":"ciao"

}

{

"id":2,

"mystring":"sole"

}

{

"id":3,

"mystring":"mare"

}

With jq we can read and reshape the above JSON to a CSV format with:

more test.json | jq -r ". | [.id, .mystring] | @csv"

In the above command:

more test.jsonreads the filejq -rprints the raw output- the first

.selects all the elements at the root level | [.id, .mystring]retrieves theidandmystringkeys from each element|@csvsets the output format as CSV

The output is:

1,"ciao"

2,"sole"

3,"mare"

To check the complete set of options available with jq please view the manual

Stitching all together

So, how can we load a target table with just 1 COPY command? Let’s first create the target table with:

CREATE TABLE MYTARGETTABLE (id serial, myid int, mystring text);

We now want to load the myid and mystring columns of the MYTARGETTABLE table with the following COPY command reading from the test.json and applying the transformation with jq.

\copy MYTARGETTABLE(myid, mystring)

FROM PROGRAM 'more test.json | jq -r ". | [.id, .mystring] | @csv"'

CSV

The output is the data properly loaded in the MYTARGETTABLE table

id | myid | mystring

----+------+----------

1 | 1 | ciao

2 | 2 | sole

3 | 3 | mare

(3 rows)

Solving problems at the source sometimes is useful to avoid extra hops! Stitching together COPY (with PROGRAM) and jq provides us the flexibility to load JSON files without intermediary tables.